Structured outputs, evidence and freshness


Goal

This page explains how to review an agent's structured output before using it in a decision, a PM Document, a publication or a governed action.

Read a structured output

- Read the Summary.

- Read Findings and Decisions.

- Open Evidence and citations.

- Check Source freshness.

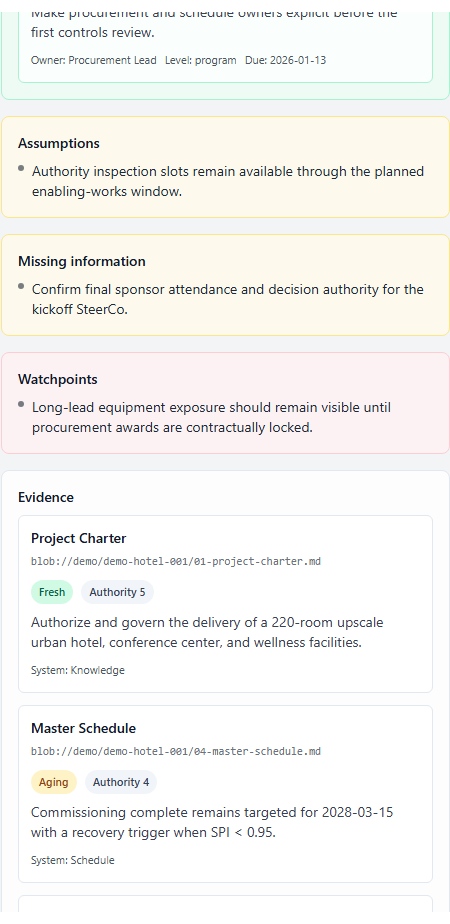

- Read Confidence and Missing information.

- Decide whether the output stays exploratory, becomes a PM Document or needs escalation.
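The "Check Source freshness" step can be sketched as a small classifier. This is a minimal illustration under stated assumptions, not the ProPM Agent implementation: the `Source` shape, the `retrieved_at` field and the `max_age` threshold are all hypothetical, and a real team would choose its own freshness policy per source type.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class Freshness(Enum):
    FRESH = "fresh"
    STALE = "stale"
    UNAVAILABLE = "unavailable"


@dataclass
class Source:
    # Hypothetical shape of one cited source; not the ProPM Agent data model.
    name: str
    retrieved_at: Optional[datetime]  # None means the evidence could not be retrieved


def classify_source(source: Source, now: datetime, max_age: timedelta) -> Freshness:
    """Label one source; max_age is an assumed team policy, not a product setting."""
    if source.retrieved_at is None:
        return Freshness.UNAVAILABLE
    if now - source.retrieved_at > max_age:
        return Freshness.STALE
    return Freshness.FRESH


if __name__ == "__main__":
    now = datetime(2024, 6, 30, tzinfo=timezone.utc)
    sources = [
        Source("Risk register", datetime(2024, 6, 28, tzinfo=timezone.utc)),
        Source("Budget export", datetime(2024, 3, 1, tzinfo=timezone.utc)),
        Source("Vendor contract", None),
    ]
    for s in sources:
        print(s.name, "->", classify_source(s, now, timedelta(days=30)).value)
    # Risk register -> fresh
    # Budget export -> stale
    # Vendor contract -> unavailable
```

An unavailable source is kept as its own category rather than folded into "stale", because the two call for different follow-up: a stale source can be refreshed, while a missing one is only an alert.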

Decision table

| Situation | Risk | Recommended decision |
|---|---|---|
| No evidence | The result cannot be justified | Do not publish; add context or a source |
| Stale source | The source is too old | Refresh, reimport or confirm manually |
| Conflicting sources | Sources disagree | Human arbitration required |
| Unavailable source | Evidence could not be retrieved | Treat as an alert, not as proof |
| Low confidence | The result is uncertain | Keep exploratory or request review |
| External action proposed | Impact outside ProPM Agent | Use Actions and approvals |
| Shareable deliverable | Versioning and governance needed | Open PM Documents and artifacts |
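The decision table can be read as a list of rules, sketched below. The `ReviewState` flags are hypothetical names for what a reviewer notes while reading the output. Several situations can apply at once and the table does not rank them, so this sketch returns every matching row in table order instead of picking a single winner.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ReviewState:
    # Hypothetical flags a reviewer records while reading the output.
    has_evidence: bool = True
    stale_source: bool = False
    conflicting_sources: bool = False
    unavailable_source: bool = False
    low_confidence: bool = False
    external_action_proposed: bool = False
    shareable_deliverable: bool = False


# One entry per row of the decision table: (situation, test, recommended decision).
RULES: List[Tuple[str, Callable[[ReviewState], bool], str]] = [
    ("No evidence", lambda s: not s.has_evidence, "Do not publish; add context or a source"),
    ("Stale source", lambda s: s.stale_source, "Refresh, reimport or confirm manually"),
    ("Conflicting sources", lambda s: s.conflicting_sources, "Human arbitration required"),
    ("Unavailable source", lambda s: s.unavailable_source, "Treat as an alert, not as proof"),
    ("Low confidence", lambda s: s.low_confidence, "Keep exploratory or request review"),
    ("External action proposed", lambda s: s.external_action_proposed, "Use Actions and approvals"),
    ("Shareable deliverable", lambda s: s.shareable_deliverable, "Open PM Documents and artifacts"),
]


def recommended_decisions(state: ReviewState) -> List[Tuple[str, str]]:
    """Return (situation, decision) for every row that applies, in table order."""
    return [(situation, decision) for situation, applies, decision in RULES if applies(state)]


if __name__ == "__main__":
    state = ReviewState(stale_source=True, low_confidence=True)
    for situation, decision in recommended_decisions(state):
        print(f"{situation}: {decision}")
    # Stale source: Refresh, reimport or confirm manually
    # Low confidence: Keep exploratory or request review
```

An empty result means no row of the table was triggered; it does not mean the output is approved.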

Human review

AI outputs require human review before publication, sponsor decision, external communication, customer notification, ticket creation or governed action. Confidence is not approval.
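The rule that confidence is not approval can be expressed as a gate that never reads the confidence score. In this hypothetical sketch, `StructuredOutput`, `reviewed_by` and the use labels are illustrative names, not ProPM Agent fields; the set of gated uses is copied from the paragraph above.

```python
from dataclasses import dataclass
from typing import Optional

# Uses listed on this page that require human review first.
REVIEW_REQUIRED = {
    "publication",
    "sponsor decision",
    "external communication",
    "customer notification",
    "ticket creation",
    "governed action",
}


@dataclass
class StructuredOutput:
    confidence: float                  # hypothetical 0.0-1.0 score shown on the output
    reviewed_by: Optional[str] = None  # hypothetical: set only after a person reviews it


def may_use(output: StructuredOutput, use: str) -> bool:
    """Allow a gated use only after human review; confidence is never consulted."""
    if use not in REVIEW_REQUIRED:
        return True  # e.g. keeping the output exploratory
    return output.reviewed_by is not None


if __name__ == "__main__":
    draft = StructuredOutput(confidence=0.97)
    print(may_use(draft, "publication"))  # False: high confidence is not approval
    draft.reviewed_by = "pm.lead"
    print(may_use(draft, "publication"))  # True
```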

Downstream flow

The recommended flow is run → structured output → artifact → version → PM Document → Download / Publish / Add to knowledge.
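The recommended flow can be checked as a chain of allowed transitions. This is a sketch under assumptions: the stage labels are taken from the sentence above, and `follows_recommended_flow` is a hypothetical helper, not a product feature. It accepts a partial path, so an output that has only reached "artifact" still passes, while a shortcut such as publishing straight from a structured output does not.

```python
from typing import Dict, List, Set

# Allowed next stage for each stage of the recommended flow.
NEXT_STAGE: Dict[str, Set[str]] = {
    "run": {"structured output"},
    "structured output": {"artifact"},
    "artifact": {"version"},
    "version": {"PM Document"},
    "PM Document": {"Download", "Publish", "Add to knowledge"},
}


def follows_recommended_flow(path: List[str]) -> bool:
    """True if every consecutive pair of stages in path is an allowed transition."""
    return all(nxt in NEXT_STAGE.get(cur, set()) for cur, nxt in zip(path, path[1:]))


if __name__ == "__main__":
    print(follows_recommended_flow(
        ["run", "structured output", "artifact", "version", "PM Document", "Publish"]
    ))  # True
    print(follows_recommended_flow(["structured output", "Publish"]))  # False: skips artifact, version and PM Document
```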

Support and audit IDs

Fields such as Trace ID, Structured output ID and Context snapshot ID are useful for support and audit. They do not replace business evidence review. Use AI Log and Support diagnostics for investigations.